Education 2.0 Conference Highlights Scam Offenses Linked To Deepfake Educators

Posted on : February 23, 2026

AI-generated educators are becoming a visible part of online learning, offering scale and convenience while raising new questions. As deepfake teaching tools blur the line between human and synthetic instruction, concerns around transparency, trust, and scam offenses are prompting closer scrutiny across digital education environments.

What if the educator teaching your next online course was entirely AI-generated? As digital education continues to evolve, artificial intelligence is no longer limited to background tools. AI-generated instructors are now appearing in online courses and virtual classrooms, often without learners realizing they may be engaging with synthetic educators rather than human teachers.

These changes are prompting deeper conversations across the education sector. At the Education 2.0 Conference, experts have highlighted scam offenses linked to deepfake educators and growing transparency gaps. Those discussions open the door to a closer look at how AI-generated instruction is influencing trust, accountability, and learner decision-making across digital education. Let's get started.

TL;DR: Key Takeaways

- See how AI-generated educators are entering online classrooms.

- Understand how weak disclosure fuels scam offenses.

- Spot risks tied to fake instructors and credentials.

- Hear what experts at the Education 2.0 Conference highlight.

- Learn why verification and fraud monitors matter.

- Build awareness to protect trust in digital learning.

How Artificial Intelligence Is Blurring Educational Authenticity

Artificial intelligence can now replicate the tone, expressions, and teaching styles of real educators with striking accuracy. From lifelike video lectures to polished voiceovers, AI-generated instruction often mirrors traditional classroom delivery so closely that learners may not question who is actually behind the lesson.

As synthetic educators follow familiar academic formats, the line between genuine and generated instruction continues to blur. Limited disclosure makes it harder to distinguish authentic teaching from AI-created content, creating space for scam offenses linked to misrepresentation. These concerns were highlighted at our EdTech summit, where experts examined how unchecked use of synthetic instruction can gradually erode trust in digital education.

Why AI-Generated Teachers Are Becoming More Common

The demand for flexible, scalable education has made AI-generated instruction increasingly appealing to digital learning platforms. Synthetic teachers enable continuous content delivery, reaching learners across regions, time zones, and learning formats without interruption.

Platforms are turning to these tools because they:

- Accelerate content deployment, allowing institutions to launch courses and updates across multiple programs far more quickly than traditional production cycles.

- Lower long-term operational costs while maintaining a consistent instructional experience for large and diverse learner groups.

- Support large-scale online learning demands, helping platforms keep pace with growing enrollment without expanding faculty at the same rate.

When speed and scale begin to outweigh transparency, accountability boundaries become less clear. This creates conditions in which scam offenses tied to synthetic instruction can gradually surface. Experts at the Education 2.0 Conference have emphasized the growing role of fraud monitors in identifying misuse early, particularly as AI adoption in digital education continues to expand.

How Weak Safeguards Enable Misuse of AI-Generated Educators

At their best, AI-generated educators are designed to support learning, not replace trust. Problems arise when safeguards around identity, credentials, and disclosure fail to keep pace with adoption. In these gaps, synthetic instructors can be presented as real educators, complete with fabricated qualifications or professional histories that learners have little ability to verify.

Misuse commonly appears in several areas:

- AI-generated instructor profiles that depict synthetic educators as qualified faculty members, using convincing biographies, imagery, and teaching credentials to establish false credibility.

- Courses led by AI-generated teachers that are promoted alongside misleading or unverifiable certifications, leaving learners uncertain about the legitimacy of outcomes or academic recognition.

- Expert talks created using synthetic media without proper validation, presenting simulated expertise as authentic thought leadership.

When oversight remains limited, synthetic instruction can shift from innovation to misrepresentation. This risk continues to be examined at our education conference, where experts discuss scam offenses tied to weak safeguards and emphasize the need for clearer verification practices as AI-driven education models continue to expand.

Why Questions Of Authenticity Matter To Learners & Institutions

Learners make important educational decisions based on perceived instructor credibility. Time, money, and personal data are invested with the expectation that guidance comes from legitimate sources.

Unchecked scam offenses can lead to:

- Reduced confidence in digital learning platforms and credentials.

- Institutional hesitation to partner with EdTech providers lacking transparency.

- Gradual erosion of trust across the education ecosystem.

These long-term consequences explain why authenticity and scam offenses are increasingly addressed in strategic conversations at our EdTech summit.

What Experts Are Paying Attention To Now

As concerns around synthetic instruction grow, education leaders are focusing on balance rather than resistance. Conversations at our EdTech summit reflected a shared understanding that innovation must move forward alongside accountability, particularly as scam offenses linked to AI-generated instruction become more sophisticated.

Experts are paying close attention to:

- Clear disclosure when AI-generated educators are used in learning environments.

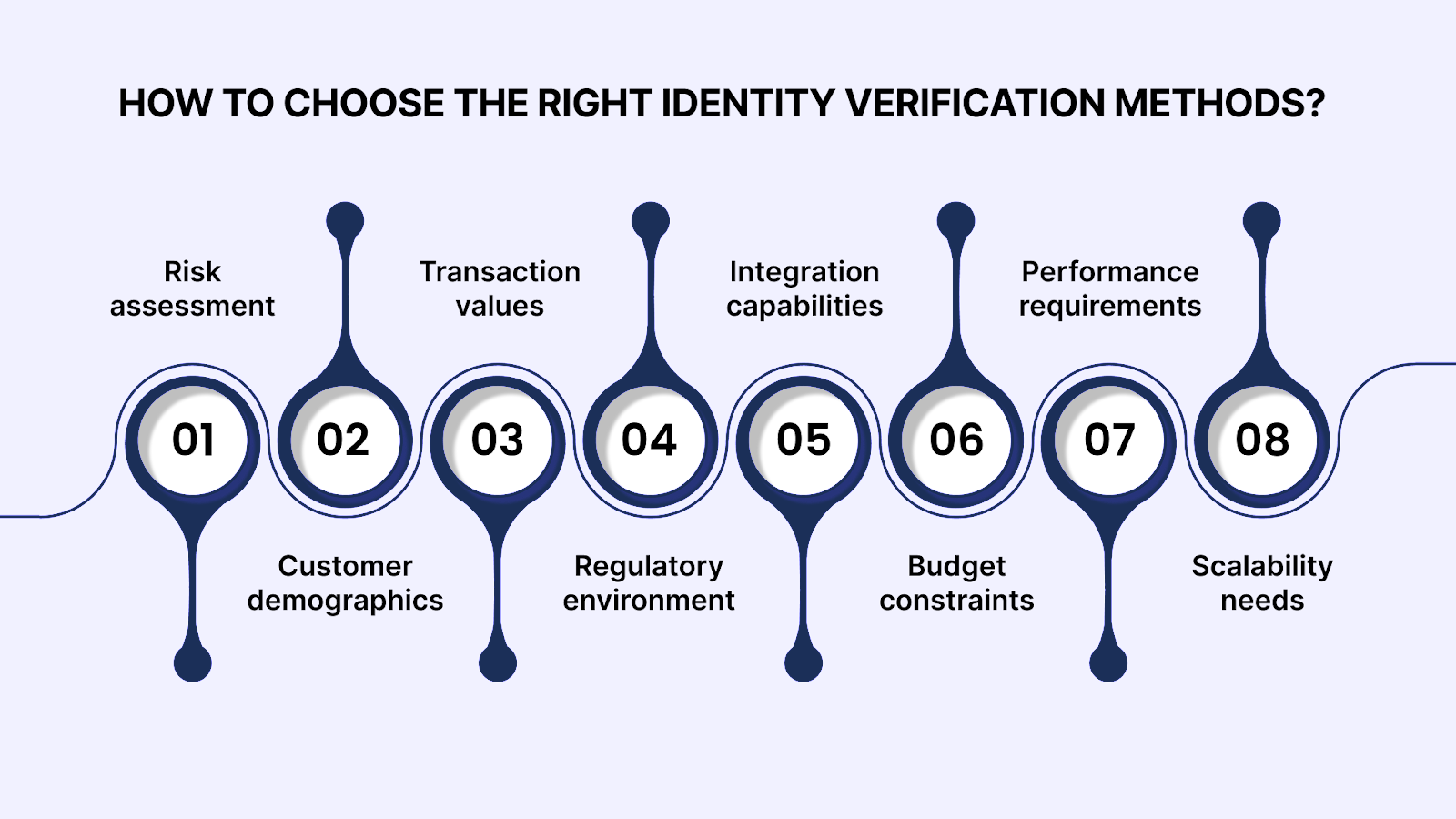

- Stronger verification processes that prevent impersonation and misuse.

- Early detection systems that help platforms identify and report scam offenses before they escalate.

Far from limiting innovation, these priorities are guiding digital education toward a more transparent and resilient future. As trust becomes integral to the design of AI-driven learning models, progress can continue to benefit both learners and institutions.

How Education Platforms Can Respond To Scam Offenses

Responsible adoption of AI in education begins with clarity and communication. Platforms have an opportunity to ensure AI enhances learning without enabling scam offenses.

Practical steps include:

- Verifying instructor identities and clearly communicating teaching sources to learners.

- Disclosing when AI-generated tools are involved in instruction or assessment.

- Using fraud monitors and other monitoring systems to detect patterns associated with scam offenses early.

- Promoting digital literacy so learners understand synthetic media.

Together, these actions help platforms move beyond reactive responses and build resilient learning environments.
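As a rough illustration of how the verification and disclosure steps above might translate into an automated check, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the `InstructorProfile` and `Credential` classes, their field names, and the `audit_profile` function are hypothetical and do not describe any specific platform or fraud-monitoring product.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Credential:
    title: str
    issuer: str
    verified: bool = False  # hypothetical flag set by a platform's own checks


@dataclass
class InstructorProfile:
    display_name: str
    is_ai_generated: bool
    ai_disclosed: bool = False       # is the AI involvement shown to learners?
    identity_verified: bool = False  # has a human instructor's identity been checked?
    credentials: List[Credential] = field(default_factory=list)


def audit_profile(profile: InstructorProfile) -> List[str]:
    """Return readable flags for a course listing; an empty list means no flags."""
    flags = []
    # Disclosure: synthetic instructors should be labeled as such.
    if profile.is_ai_generated and not profile.ai_disclosed:
        flags.append("AI-generated instructor is not disclosed to learners")
    # Identity: human instructors should be verified before listing.
    if not profile.is_ai_generated and not profile.identity_verified:
        flags.append("human instructor identity is not verified")
    # Credentials: anything unverified is surfaced for review.
    for cred in profile.credentials:
        if not cred.verified:
            flags.append(f"unverified credential: {cred.title} ({cred.issuer})")
    return flags


if __name__ == "__main__":
    example = InstructorProfile(
        display_name="Dr. Example",
        is_ai_generated=True,
        credentials=[Credential("PhD in Education", "Example University")],
    )
    for flag in audit_profile(example):
        print(flag)
```

A check like this only surfaces listings for human review. It does not decide whether a scam offense has occurred, and a real deployment would need its own rules for what counts as adequate disclosure or verification.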

Key Takeaways From The EdTech Summit On Digital Learning Safety

As digital education continues to mature, conversations about safety are becoming just as central as conversations about innovation. Education leaders at our education conference emphasized how trust is built through openness, ethical design, and shared responsibility, especially as scam offenses tied to emerging technologies grow more complex.

Looking ahead, the Education 2.0 Conference remains a key forum for examining scam offenses associated with AI-driven education models and for sharing practical strategies to strengthen digital learning environments. Ongoing discussions highlight how verification tools and fraud monitors can reinforce platform resilience, supporting education providers as they continue to innovate while building confidence and trust across the learning ecosystem.

FAQs

1. What are deepfake educators in online learning environments?

A. Deepfake educators are AI-generated teaching figures designed to look and sound like real instructors. They are increasingly used in digital courses, recorded lessons, and virtual classrooms. Their realism can make it difficult for learners to recognize whether instruction is human-led or synthetic.

2. Why are deepfake educators becoming more common in digital education?

A. Education platforms are adopting AI-generated instruction to scale content delivery and meet growing global demand. These tools allow courses to be produced faster and delivered consistently across regions. Challenges arise when transparency and safeguards are not clearly established.

3. How do deepfake educators contribute to scam offenses?

A. Scam offenses can occur when synthetic instructors are presented as real educators with fabricated credentials. Learners may unknowingly enroll in courses based on misleading authority or unverifiable certifications. Weak disclosure increases the risk of misrepresentation.

4. Who typically attends the Education 2.0 Conference?

A. Attendees include educators, administrators, EdTech leaders, and solution providers. This mix of perspectives enriches discussions and contributes to balanced insights.

5. How does the venue experience enhance attendee engagement?

A. The venues are selected to support comfort, interaction, and focused discussion. Shared spaces encourage spontaneous conversations. This environment enhances collaboration throughout the event.